So I’m not super familiar with Discord, but Elo Predicts! is, and as a result we have a Squiggle Discord server. What will it be used to discuss? I don’t know. But here is an invite link: https://discord.gg/2ac6SBRnDD

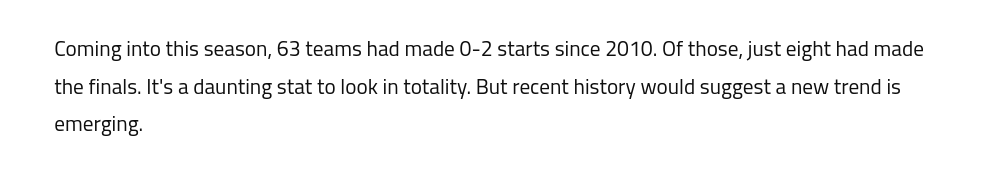

Introducing Power Rankings

Last week, in the Squiggle models group chat – of course there’s a group chat – Rory had a good idea:

It turned out that everybody had data on hand for this, because if you have a model, you also have a rating system. So I began collecting this, and now there’s a page to view it.

There’s also a widget here on the site, to the right of this post, or else above it.

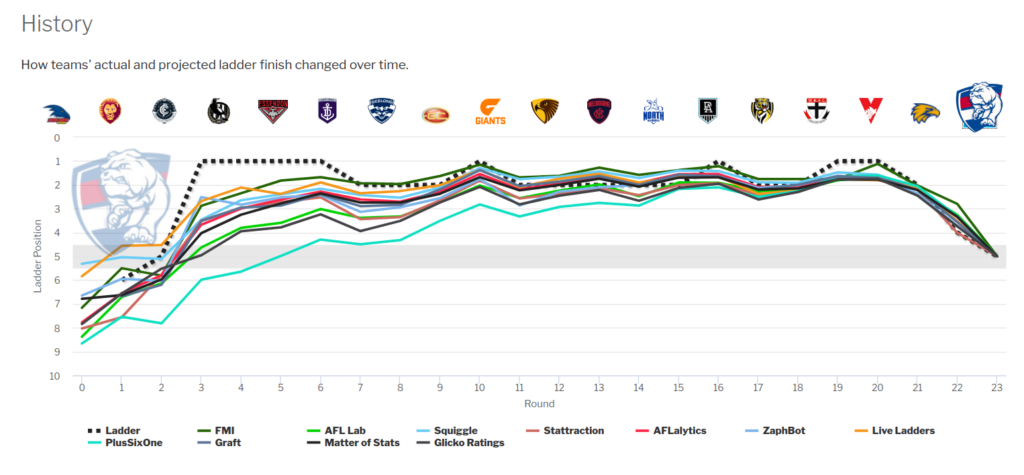

On the main page, you can see how ratings change over time, and compare ratings from different models.

Power Rankings measure team strength at a point in time. They ignore the fixture, home ground advantage, and all the other factors that go into predicting the outcome of a match or a season. Instead, they’re a simple answer to Rory’s question: Which teams are actually good?

The Curse of the Curse

I enjoy a useless AFL stat as much as the next person, but this kind of thing tests me:

“Curse” is a bit of a tell in footy. It usually means “coincidence.” If it was a real effect, we’d have a decent theory about why. People love to invent theories. There’s no effect we won’t try to pair with a cause, no matter how thin the evidence. When there’s an effect and no cause, I tend to doubt it’s due to the spooky unseen hand of an unnamed force.

Usually a “curse” is an odd stat that, at first glance, seems like it can’t be the result of random chance, but that’s only because we don’t understand randomness. Our gut tells us that flipping five heads in a row is basically impossible, for example, when in fact true randomness tends to contain a lot more natural variation than people think. (You can flip ten heads in a row, if you’re willing to toss coins for a few hours, and people will think you’re a magician.)

Here’s the 0-2 stat:

I have a few problems with this.

First, I have to point out it’s technically wrong, because we’ve had nine finalists from 0-2, counting Carlton in 2013 who were elevated from ninth after Essendon’s disqualification.

But more importantly, the underlying effect sounds suspiciously like “It’s harder to make finals if you lose games.” And we knew that already. Is there anything magical about the first two games? Because if not, it’s just saying that dropping games hurts your finals chances.

Then there’s two snipes: the starting point (2010), and the number of games (2). If there’s a genuinely interesting effect here, and not a coincidence, we should expect to see not-quite-as-dramatic-but-still-suggestive numbers when those key numbers are varied a little.

Instead, it vanishes pretty abruptly. If you look at a longer time period, you see about 20% of 0-2 teams making finals, and if you look at 0-1 or 0-3 or 0-4 teams, the numbers again are about what you’d expect: about one-third of 0-1 teams make it, about one-in-ten 0-3 teams, and only Sydney 2017 has made it from 0-4 this century. So the more games you lose, the harder it is to make finals, in a steady and predictable way.

Because what actually happened here – the whole reason this stat became popular – is that between 2008 and 2016, there was a patch where only two 0-2 teams made finals (Carlton 2013 and Sydney 2014). This hit rate was quite a bit lower than the years before and after, although not wildly so:

| Year | Finalists from 0-2 |
| --- | --- |
| 2000 | 2 out of 5 |
| 2001 | 1 out of 5 |
| 2002 | 1 out of 5 |
| 2003 | 1 out of 3 |
| 2004 | 2 out of 4 |
| 2005 | 0 out of 3 |
| 2006 | 2 out of 3 |
| 2007 | 1 out of 4 |
| 2008 | 0 out of 4 |
| 2009 | 0 out of 4 |
| 2010 | 0 out of 6 |
| 2011 | 0 out of 4 |
| 2012 | 0 out of 5 |
| 2013 | 1 out of 7 |
| 2014 | 1 out of 6 |
| 2015 | 0 out of 5 |
| 2016 | 0 out of 4 |
| 2017 | 1 out of 8 |
| 2018 | 1 out of 4 |
| 2019 | 1 out of 5 |
| 2020 | 1 out of 4 |
| 2021 | 3 out of 5 |

Eyeballing that, you might notice something else about the middle years: There are more 0-2 teams. And indeed we had a number of clubs at historical lows in this period, including two teams who were introduced to the league. Fourteen of those 0-2 non-finalists from 2008-2016 are actually just four clubs failing over and over: the two expansion teams plus Melbourne and Richmond.
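If you'd rather not eyeball it, here is a minimal sketch that tallies the table by era. The numbers are copied straight from the table; the era boundaries and the names `ZERO_TWO` and `hit_rate` are just my choices for illustration.

```python
# (year, finalists from 0-2, teams that started 0-2), copied from the table
ZERO_TWO = [
    (2000, 2, 5), (2001, 1, 5), (2002, 1, 5), (2003, 1, 3),
    (2004, 2, 4), (2005, 0, 3), (2006, 2, 3), (2007, 1, 4),
    (2008, 0, 4), (2009, 0, 4), (2010, 0, 6), (2011, 0, 4),
    (2012, 0, 5), (2013, 1, 7), (2014, 1, 6), (2015, 0, 5),
    (2016, 0, 4), (2017, 1, 8), (2018, 1, 4), (2019, 1, 5),
    (2020, 1, 4), (2021, 3, 5),
]


def hit_rate(first_year, last_year):
    """Return (finalists, 0-2 teams, finalists / teams) for an inclusive span of years."""
    rows = [row for row in ZERO_TWO if first_year <= row[0] <= last_year]
    finalists = sum(made_it for _, made_it, _ in rows)
    teams = sum(started for _, _, started in rows)
    return finalists, teams, finalists / teams


# Era boundaries are an assumption: before, during and after the "patch".
for first_year, last_year in [(2000, 2007), (2008, 2016), (2017, 2021), (2000, 2021)]:
    finalists, teams, rate = hit_rate(first_year, last_year)
    print(f"{first_year}-{last_year}: {finalists} of {teams} ({rate:.0%})")
```

Over the whole table that comes to 19 finalists from 103 starts at 0-2, against 2 from 45 in the 2008-2016 patch.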

So this always looked a fair bit like random variation plus an unusually weak bottom end of the comp. But somehow it gave birth to a “curse” that meant flag contenders couldn’t afford to drop their second game.

And now that regular service has resumed – implying that there was never much to see in the first place – “a new trend is emerging.”

Ladders of Future Past

You can now use the ladder predictor on seasons as far back as 2000. Relatedly, the Squiggle API now serves fixture info on games dating back to 2000, and you can also use it to get a list of which teams were playing in any of those years.
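For anyone who wants to pull that data themselves, here is a rough sketch of how a request might look. Treat the details as assumptions rather than documentation: the base URL, the semicolon-separated `q=games;year=...` query style and the `year` parameter on `teams` are from my reading of the API, and `build_query` and `fetch` are hypothetical helper names. Check the API's own docs before relying on any of it.

```python
import json
import urllib.request

# Assumed base URL for the Squiggle API.
API_ROOT = "https://api.squiggle.com.au/"


def build_query(query, **params):
    """Build a URL like ...?q=games;year=2000 (assumed semicolon-separated style)."""
    parts = [f"q={query}"] + [f"{key}={value}" for key, value in params.items()]
    return API_ROOT + "?" + ";".join(parts)


def fetch(url, user_agent):
    """Fetch a URL and decode the JSON body, identifying ourselves via User-Agent."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Fixture for the 2000 season, and the teams that played that year.
games_url = build_query("games", year=2000)
teams_url = build_query("teams", year=2000)
print(games_url)
print(teams_url)
```

Calling `fetch(games_url, "my-script - me@example.com")` would then make the actual request; the contact string is a placeholder for your own.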

You might be wondering why you’d ever want to predict past ladders. To be honest, I’m not sure. I just know that people write in sometimes asking if the site can let them do that.

This particular addition was triggered by Jake, who emailed me to say he’d been in iso for a month, and he kept busy by re-entering past seasons into the predictor one game at a time to see how the ladder changed. Jake had done this for 2011-2022, but wanted to go back further.

So now you can. I am all about football as a mental escape from reality, Jake. That’s the best possible use of football.

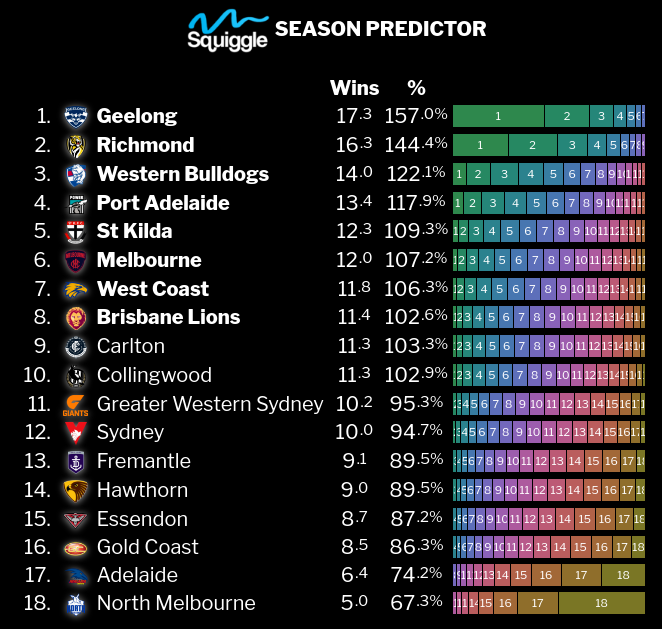

Season 2022 loaded

All the Squiggle goodies are now updated to support the new season:

The Squigglies 2021: Pre-Season Ladders

Heading into 2021, there was a bit of hive mind syndrome going around:

So everybody had Richmond way too high, and Melbourne, Sydney and Essendon too low. Collingwood were generally tipped for somewhere around mid-table, often pushing into the Eight, as were St Kilda.

This same-same field of predictions delivered neither a spectacularly good nor spectacularly bad ladder. Instead, everyone was just kind of okay. The average was better than just tipping a repeat of 2020, but not by much.

Every 2021 Expert Preseason Ladder Rated

Best Ladder: Daniel Cherny

All year long, the Western Bulldogs looked a deserving top 2 team. Then they plunged from 1st to 5th in the final three rounds, upending a lot of ladder predictions along the way. A beneficiary was Daniel Cherny, who’d tipped them for 6th, and suddenly had the best projection out of anyone. He had 6 of the Top 8, missing Sydney & Essendon for Richmond & St Kilda, and half the Top 4. He also wisely tipped Collingwood to fall further than most (although not as far as they actually did).

Runner-Up: Sarah Black

Best Ladder by a Model: The Flag (6th overall)

After coming second in this category last year, this was a great performance by The Flag, nailing three out of the Top 4, with Richmond the only miss.

Honourable Mention: AFLalytics (8th overall)

Lifetime Achievement Award: Peter Ryan

Of the 26 experts and models I’ve tracked for three consecutive years, Peter has the best record, averaging 65.03 points across that period. He’s been getting better, too, finishing 19th in 2019, 9th in 2020, and 3rd this year.

Honourable Mention: Squiggle (5th in 2019, 20th in 2020, 9th in 2021)

Mid-Season Predictions

If you’re interested in how models predicted the final ladder during the season, head on over to the Ladder Scoreboard. New model Glicko Ratings scored best this year, while as usual all models significantly outperformed the actual ladder.

Ninety-nine percent

via maxbarry.com

If you do one thing each day that has a 99% survival rate, you’ll likely be dead in under ten weeks. If boarding a plane had a 99% survival rate, a typical flight would end by carting off at least one passenger in a body bag, perhaps two or three. Ninety-nine sounds close enough to 100, but anything with a 99% survival rate is incomprehensibly dangerous.

Go sky-diving, and you’re over two thousand times safer than if you were doing something with a 99% survival rate. Driving, the most dangerous everyday activity, requires you to clock up almost a million miles of travel before you’re only 99% likely to survive. Even base jumping, perhaps the single most dangerous thing you can do without actively wanting to die, is twenty-five times safer than anything that carries a 99% survival rate.

Ninety-nine bananas is essentially one hundred bananas. Ninety-nine days is practically a hundred days. But 99% is often not even remotely close to 100%. It feels like similar numbers should lead to similar outcomes, but the difference in life expectancy between 99% and 100% survivable daily routines isn’t one percent: It’s ten weeks versus immortality.

It’s simple enough to calculate the probability of more than one thing happening: You just multiply the individual probabilities together. The likelihood of surviving for three days, for example, while doing one thing per day with a 99% survival rate, is 0.99 x 0.99 x 0.99 = 0.9703, or 97.03%.

But we find this deeply counter-intuitive. We prefer to think in categories, where everything can be labeled: good or bad, safe or dangerous, likely or unlikely. If we have an appointment and need to catch both a train and a bus, each of which has a 70% chance of running on time, we tend to consider both events as likely, and therefore conclude that we’ll make it. The actual likelihood that both services run on time is 0.70 x 0.70 = 0.49, or only 49%: We’ll probably be late.
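The multiplication is easy to check for yourself. This is a small sketch, assuming every event is independent of the others, which is the same assumption the arithmetic above makes; the function names are mine.

```python
def combined_chance(p_single, repeats):
    """Chance that `repeats` independent events, each with probability p_single, all go our way."""
    return p_single ** repeats


def repeats_until_below(p_single, threshold=0.5):
    """Count how many repeats it takes for the combined chance to fall below threshold."""
    repeats, chance = 0, 1.0
    while chance >= threshold:
        repeats += 1
        chance *= p_single
    return repeats


print(round(combined_chance(0.99, 3), 4))   # three days at 99% per day
print(round(combined_chance(0.70, 2), 2))   # train and bus both on time
print(repeats_until_below(0.99))            # days until survival is worse than a coin flip
```

The last line comes out at 69 days, which is where "under ten weeks" comes from.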

We also prioritize feelings over numbers. Here’s a game: Pick a number between 1 and 100, and I’ll try to guess it. If I’m wrong, I’ll give you a million dollars. If I’m right, I’ll shoot you dead. Would you like to play?*

Most people won’t play this game, because the thought of being shot dead is too scary. It’s shocking and visceral, so when you weigh up the decision, both potential outcomes balloon in your mind until they feel roughly equal, as if the odds were 50/50, rather than one being 99 times more likely than the other.

But put the same game in a mundane context — if instead of being shot, you get COVID, and instead of a million dollars, you just go to work as usual — and we tend to return to categorical thinking, where the dangerous-but-unlikely outcome is filed away as too improbable to be worth thinking about. As if close to 100% is close enough.

Between 99% and 100% lies infinity. It spans the distance between something that happens half a dozen times a year and something that hasn’t happened once in the history of the universe. With each step we take beyond 99%, we cover less distance than before: 1-in-200 gets us to 99.50%, then 1-in-300 to 99.67%, then 1-in-400 only to 99.75%. We’ve quadrupled our steps, but only covered three-quarters of the remaining distance. We can keep forging ahead forever, to 1-in-a-thousand and 1-in-a-million and beyond, and still there will be an endless ocean between us and 100%.
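Here is the same walk as a short sketch, so you can keep stepping as far as you like. It assumes we start at 1-in-100 (that is, 99%) and measure the "remaining distance" as the gap to 100%; `safe_percent` and `gap_covered` are illustrative names.

```python
def safe_percent(one_in_n):
    """Survival rate, in percent, for something that goes wrong 1 time in one_in_n."""
    return 100 * (1 - 1 / one_in_n)


def gap_covered(one_in_n, start=100):
    """Fraction of the gap between the 1-in-start rate and 100% closed by reaching 1-in-n."""
    return 1 - start / one_in_n


for one_in_n in (100, 200, 300, 400, 1_000, 1_000_000):
    print(
        f"1-in-{one_in_n:,}: {safe_percent(one_in_n):.4f}% safe, "
        f"{gap_covered(one_in_n):.2%} of the gap from 99% covered"
    )
```

However large the number gets, the fraction covered never reaches 100%.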

You have to watch out for 99%. You have to respect the territory it conceals.

* I pick 73.

Ladder Prediction 2021

You know what, too many people are doing half-arsed ladder predictions. By which I mean, they’ll only tip the top 8, or give a range of possible finishing values, or say who will rise and who will fall but not by how much.

That’s garbage, people. Yes, it’s difficult. Sure, nobody will ever get it just right. You can still have a crack, and let me measure it.

Here is Squiggle’s prediction for 2021. No really hot takes this year, and it’s going to be a tough one after an unusual 2020. But this is the model’s attempt after factoring in off-season movements, long-term injuries, and preseason form (yes, that one practice match).

A Novelist’s Guide to Suspense in Football

For my day job, I write novels. One of my favourite story-telling elements is suspense, which is responsible for the feeling that you can’t put the book down because you want to see what happens next. Suspense is a huge part of sport, too, and so I decided to break down AFL Australian Rules Football from a story-telling perspective, to see what it does right and wrong.

The first thing to note about suspense is that it’s kind of unpleasant to experience. It makes us feel tense, and we generally don’t want to feel tense. But we’ll willingly subject ourselves to it when we know there’s an emotional payoff at the end, and our tension will be resolved into another feeling (joy, usually, but not always).

Logically, we might want to skip the unpleasant part and jump straight to the payoff, but of course it doesn’t work that way: We can’t read the last chapter of a book, or watch the final scene of a movie, and feel the same emotional impact. A big part of the payoff is the feeling of release, and if we haven’t been stewing in tension, there’s nothing to release from.

Feeling tense is also a good sign that a story is accomplishing its most basic purpose, by the way, which is to sustain your interest. It’s not the only way to do that, but if you feel tense, you must care about what’s happening, so the story is at least getting that right.

So, as a writer, I’m a fan of suspense. But it’s a little dangerous, because of the aspect I mentioned before, that tension is unpleasant. This puts the author on the hook to deliver an emotional payoff that makes the tension worthwhile – otherwise readers will feel frustrated and annoyed, even if they can’t quite articulate why.

Ideally, readers want a joyful payoff: good people succeeding, bad people getting what they deserve. But other emotions are usually fine, too–horror, despair, surprise. It’s not essential that the payoff is positive; it’s only essential that it exists. After making people feel tense, you have to let them feel something else.

Football is exceptionally good at suspense. Here’s why:

- The situation can change rapidly. That’s where suspense comes from: the knowledge that things are about to change in an important way. The greater the difference between possible outcomes – when it might be very good but might also be very bad – and the more imminent the change is, the more tension we feel. When a situation is static, there’s no suspense. Nor is there much when the coming change is predictable or irrelevant. But when everything is on the line and will be irrevocably resolved either one way or the other at any moment, that’s peak suspense.

- Tension is resolved quickly and cleanly. Our team scores or is scored against; it wins or loses. This unambiguous resolution into one of two polar opposite outcomes is really wonderful, and hard to craft in fiction. Even when the emotional payoff is negative – our team concedes a goal or loses the match – it’s delivered emphatically, so there’s closure. Knowing in advance that we’ll get that clear resolution is important, too, allowing us to surrender to the experience.

- The amount of tension varies during a game. Tension is exhausting. Too much for too long and we’ll withdraw emotionally to protect ourselves. So tension should build and subside, multiple times, before being resolved – and that’s what we get from a football match, with the ebb and flow of scoring opportunities, and then a final outcome.

- The suspense is natural, not forced. A story can fake up some cheap suspense by pausing to deliberately draw out the moment of reveal. You might be able to think of a few books or shows that did this, and you probably found them annoying, because you became aware that someone was deliberately making you feel tense (which is unpleasant!) just for the sake of it. But in football, suspense is organic, the natural result of the play, which allows us to remain compelled.

- The resolution matters. We usually have a preference for one team, which means, in simple terms, that there are good guys and bad guys, and we’ll feel differently depending on who prevails.

Of course, all this is more true of some games than others. When there’s a blowout, the outcome becomes predictable, and there’s less tension. And dead rubbers are hard to care about, even close ones, because the result won’t change anything.

Now you might notice that all of these excellent positives are basic elements of the game. In fact, they’re true of most sports. (And other things, like gambling.) That’s no coincidence; sport’s ability to generate a good suspenseful experience is, no doubt, a key factor in why we enjoy it.

As we turn to the negatives, though – the ways in which football does suspense wrong – we’ll be mostly looking at modern inventions. Because, at least in story-telling terms, what we’ve done to football is mostly screw it up.

Bad Suspense #1: The Goal Review

Woo boy.

To be fair, let’s declare up front that the Goal Review isn’t supposed to create suspense. It’s supposed to reduce umpiring error. In a little while, I’ll attempt to convince you that this is far less important than it seems. But first let’s just tally up the damage it does to good suspense.

The Goal Review attacks the moment of resolution: the instant that tension turns into something else (ecstasy, despair). A football match lasts for a couple of hours but has a relatively small number of key moments, where tension is spiking because the play may be about to result in an important goal. These moments are an immensely valuable opportunity to reward the audience by releasing the tension they’ve built up.

Here are two goals by the same player: ex-Tiger, new Saint Jack Higgins. First, a regulation goal. It’s worth turning on sound so you can hear how crowd noise lifts whenever the likelihood of change rises, ebbs when the situation becomes static, and peaks in the moment of resolution.

All this is good. Tension rises and subsides, spikes and is cleanly resolved. It’s satisfying. Even opposition fans, hoping for no goal, receive a sharp, clean emotional response – which is fine, because good story is not about the good guys always getting their way; we all understand that.

Now another Jack Higgins goal. This time, the crowd noise rises as he’s about to goal… but wait! The goal umpire appears indecisive. The crowd’s engagement dies. Soon the field umpire calls for a review. There’s some booing, and although the commentators get excited, the crowd is restless and unhappy as they wait for a result.

The part after that, where the video review gets it wrong, is not really the problem here. The problem is everything that happened before, where the moment of emotional payoff was stretched out until it disappeared.

Of course, a bad goal review is especially unpopular. So, to be fair, here’s peak ARC, detecting a fantastic goal that would have been missed. It can’t get any better than this:

Yet even here, the crowd reaction reveals that this is an overwhelmingly negative experience (and not just because of partisan fans – other St Kilda goals from the same match receive a more typical response).

It’s unsatisfying for fans on both sides because the Goal Review tells us that the tension we just resolved is actually getting resolved the other way, in retrospect. In storytelling terms, this is a little like an after-credits scene where the bad guy turns out to be not dead after all. Even when it’s the result you wanted, it’s not satisfying and it doesn’t feel right.

So first, we have the emotional resolution being stretched out from a single moment (great!) to a minute or two (awful). The crowd’s tension turns into the bad, self-aware kind, where they know they are being subjected to an artificial pause and nothing is actually happening. The sharp emotional peak is gone; instead, we have a valley of frustrated waiting between two low hills.

Second, the act of resolution shifts from the field to the scoreboard, where the audience has to look to see which word will be flashed up on the screen. This strikes me as like the hero going home after fighting the bad guy and waiting for a phone call to confirm whether he won.

Third, no goal is safe! The audience can’t safely celebrate (or grieve) any goal unless and until it becomes absolutely clear that it won’t be reviewed. The mere threat of a review can turn quick, satisfying resolutions into slow, frustrating ones.

Here are a few more footballing crimes against suspense:

Bad Suspense #2: Deliberate Out of Bounds

This rule creates a period of two or three seconds – sometimes more – where the crowd realizes a potential infringement is about to occur but must wait for the umpire’s judgement. This is quite a lot longer than other infringements, since we need (a) the ball to finally dribble over the line, (b) the whistle to be blown – which will occur regardless of whether there’s an infringement, and thus convey no useful information – and (c) the umpire to run in and perform a signal.

Again, we’ve lost a quick, natural resolution, and have instead the artificial, annoying form of tension, where we’re being made to wait for a decision. It’s exacerbated here by the Deliberate Out of Bounds rule’s infamous ambiguity, since it’s hard to guess what the umpire will decide. And, again, it shifts our focus from the players, who we want to watch, to the umpires, who we don’t.

Bad Suspense #3: The Rushed Behind

Why, exactly, players are permitted to boop the ball over the line here but not elsewhere around the boundary line, where it matters less, is honestly beyond me.

But anyway. We have a crescendo moment where the stakes are at their highest… but there’s an escape hatch, a special pathway to the most anti-climactic of results. This is like a showdown between the hero and the antagonist that gets called off at the last minute.

Also – not that this has anything to do with suspense – it’s less satisfying to watch someone succeed through dumb luck rather than effort, wit, daring or skill. That is, in fact, the antithesis of what sport is supposed to be about. It’s perverse to incentivize world-class athletes to act like bumbling fools. If we want to watch people failing to control a football, we already have our local park.

Bad Suspense #4: The Hit Post

Most obviously, if we stopped caring about whether the ball brushed the goal post on its way through, we wouldn’t need so many goal reviews.

But beyond this, the rule that declares the ball dead when it hits the post and bounces back into play robs us of a suite of rare but shocking twist moments, where everything is suddenly transformed. I won’t go on about this, because I know it’s too radical a change for many people. But in narrative terms, it’s an amazing opportunity. And it’s natural: It’s what would happen if we hadn’t specifically created a rule to outlaw it. But because we have created that rule, we require a goal review whenever the ball approaches a post.

So that’s not great. If you only cared about suspense, you would fix those four things as a priority. And you might do it like this:

- No goal reviews. The umpire’s decision is final. That’s it.

- Deliberate Out of Bounds: Instead of asking umpires to guess whether a player intended to send the ball out of play, ask whether another player could have touched it first. It no longer matters who intended what. Terrible accidental kicks that run straight out of bounds are penalized. In the vast majority of cases, the crowd can immediately tell whether a free kick will or won’t be paid, even before the ball goes out of play.

- No special exemption for rushing behinds. When the ball is on the line, it’s do or die.

- We stop caring whether the ball brushes the post. If it goes through, it’s a score. If it bounces back, it’s play on. If it’s a Grand Final and the team is down by one point and a kick after the siren bounces back, then wow, that was some amazingly bad luck, which people will never forget.

Of course, we don’t only care about suspense. We also care about fairness. And that’s essentially why these rules exist: to make the sport fair.

Which sounds eminently reasonable, because fairness is at the heart of sport. But I want to dig into that a little. Because there are different kinds of fairness, and some are more important than others:

- Teams must engage on a level playing field, with the same opportunities. This type of fairness is non-negotiable. Without it, we don’t have sport at all, but instead something like Wrestlemania: lots of drama, no integrity. Sport can’t tolerate cheating or entrenched advantage without surrendering part of its soul.

- Victory should be determined by the performances of the players, not luck, such as in the form of umpiring errors. This is surely true. But unlike the first point, it doesn’t need to be absolutely true. In fact, it can’t be absolutely true, and sport would be worse if it were. Luck is inherent in the bounce of the ball. And while too much luck is bad – we want to watch players testing their athletic limits, not a roulette wheel – so is too little, lest we wind up with predictable matches that are divorced from the real world, where sometimes people really are done in by forces beyond our control. Yes, bad luck can be devastating, and feel like a wrong that must be righted, but it’s also natural. Football is partly an analogy for our lives, and without the danger of a truly unlucky catastrophe, that analogy becomes shallower.

I realize this may sound contentious, especially for people who don’t routinely invent stories where bad things happen to good people. But luck isn’t the ultimate enemy. When we believe it must be eliminated at all costs, we can actually damage parts of the game that matter more.

- Players shouldn’t be penalized for honest mistakes. This is a self-evidently ludicrous idea, in my opinion, but it’s why we have the Deliberate Out of Bounds rule, the Rushed Behind Rule, and the Hit Post rule, so here we are. This is a corrupted idea of fairness that leads to unfair outcomes in practice, such as rewarding players who successfully deceive umpires.

So there you go. Football is still pretty great at generating suspense. But we’re undercutting it in the name of types of fairness that don’t actually matter much. As we enter the off-season, and prepare for the annual round of which-rule-will-they-change-next, I hope that less attention will be paid to fairness, and more to making the game a satisfying experience to watch.

Max Barry is the author of seven novels including Lexicon (Top 10 Books of 2013 – Time Magazine), Jennifer Government (New York Times Notable Book 2003) and The 22 Murders of Madison May, to be published mid-2021.